The Anime Alchemist - Issue #3: The Narrative Leap

February 2026 has officially broken the speed limit for AI video. While we were all perfecting our image consistency, the underlying video engines just leaped from "impressive clips" to "narrative-ready masters." We are witnessing the birth of Agentic Cinema—where the AI isn't just a brush, but a full-stack production crew.

Kling 3.0: The Multi-Shot King

Kuaishou just dropped Kling 3.0, and it’s the most significant update for anime creators to date. The headliner? True Multi-Shot Continuity.

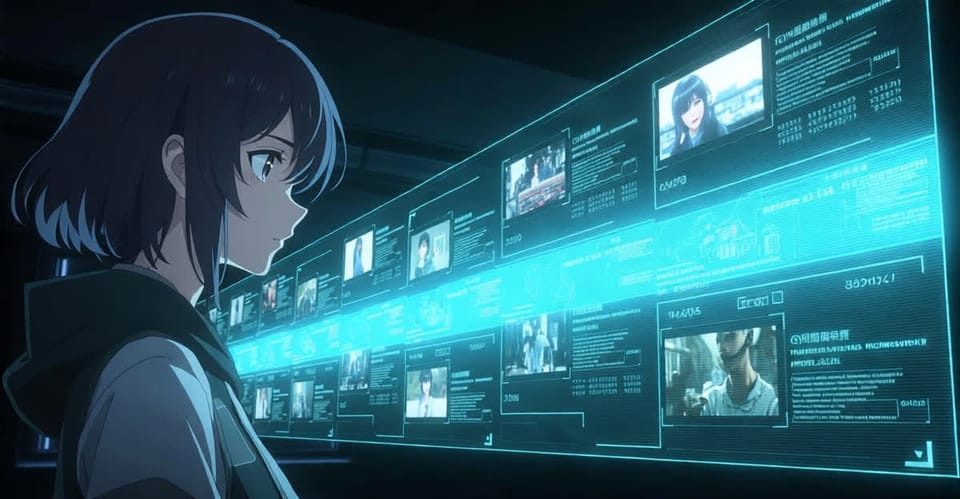

Previously, building a sequence meant manually "bridging" frames between shots to keep a character’s hair or outfit from changing. Kling 3.0 handles this natively. You can now prompt a single sequence of up to 15 seconds that includes wide shots, close-ups, and over-the-shoulder angles while maintaining pixel-perfect subject consistency. For Stoira, this means a 30-second character "lore drop", which is at least two such sequences, can be generated in a single afternoon instead of after a week of stitching.
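As a rough planning sketch, a 30-second lore drop has to be split into sequences that each respect the 15-second cap. The `Shot` type, the shot names and durations, and the greedy packing strategy below are illustrative assumptions, not part of Kling's interface.

```python
from dataclasses import dataclass

MAX_SEQUENCE_SECONDS = 15.0  # per-sequence cap quoted in the text


@dataclass(frozen=True)
class Shot:
    """Hypothetical shot description, used only for planning."""
    name: str
    seconds: float


def pack_shots(shots, cap=MAX_SEQUENCE_SECONDS):
    """Greedily group consecutive shots into sequences no longer than `cap`."""
    sequences, current, used = [], [], 0.0
    for shot in shots:
        if shot.seconds > cap:
            raise ValueError(f"{shot.name} exceeds the {cap}s cap on its own")
        # Start a new sequence when this shot would overflow the current one.
        if current and used + shot.seconds > cap:
            sequences.append(current)
            current, used = [], 0.0
        current.append(shot)
        used += shot.seconds
    if current:
        sequences.append(current)
    return sequences


# Made-up shot list totalling 30 seconds.
lore_drop = [
    Shot("wide establishing", 6),
    Shot("close-up", 4),
    Shot("over-the-shoulder", 5),
    Shot("wide battle", 8),
    Shot("close-up reaction", 7),
]
plan = pack_shots(lore_drop)
```

With these durations the five shots fall into two sequences, each exactly at the cap, so the whole drop needs two prompts rather than five stitched clips.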

Sora 2 and Veo 3.1: The Audio-Visual Merge

We are finally entering the era of native audio sync. Four of the six major models (Kling 3.0, Sora 2, Veo 3.1, and Luma Dream Machine) now support multi-character audio with voice reference.

- Sora 2 has moved beyond silent physics simulations. Its new "Neural Foley" engine predicts what a scene should sound like based on the physics of the objects (e.g., the specific crunch of snow vs. the splash of water).

- Veo 3.1 has doubled down on creative control. It now allows for "timeline scripts" with beat markers, making it the preferred tool for high-energy dance transformations and action loops where timing is everything.
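Beat markers come down to simple arithmetic: at a given tempo, beats fall every 60 / BPM seconds. The sketch below computes those timestamps for a clip. The function name and the 120 BPM example are assumptions, and the actual timeline-script syntax Veo 3.1 expects is not shown here.

```python
def beat_times(bpm, clip_seconds):
    """Return the timestamps (in seconds) of beats that fall inside the clip."""
    if bpm <= 0:
        raise ValueError("bpm must be positive")
    interval = 60.0 / bpm                 # seconds between beats
    count = int(clip_seconds / interval)  # whole intervals that fit in the clip
    # Multiply rather than accumulate to avoid floating-point drift.
    return [round(i * interval, 3) for i in range(count + 1)]


# Assumed example: a 4-second action loop cut to a 120 BPM track.
markers = beat_times(bpm=120, clip_seconds=4)
```

Each timestamp is a candidate point for a cut, pose change, or transformation hit.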

The Stoira View: Beyond the Uncanny Valley

The "Uncanny Valley" has long been the graveyard of AI video projects. At Stoira, our research into Ghibli-refining layers has shown that the solution isn't "more detail," but smarter stylization.

By using Kling 3.0 as our base motion engine and layering our custom Rajput-Anime LoRAs on top, we bypass the "rubbery" look of standard AI video. We are no longer waiting for the technology to reach "perfection"; we are designing the architecture that will make AI-driven anime indistinguishable from traditional top-tier studio work.

Looking Ahead: Agent-Driven Episodes

The barrier isn't the technology anymore; it's the orchestration. We are currently developing a pipeline that takes a single text script, automatically triggers the correct shots in Kling, syncs the dialogue in Veo, and upscales the final master to 4K, all without a single manual click.
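A minimal sketch of what that orchestration could look like, assuming each step is a plain function that receives and returns a shared episode state. Every name here (`Episode`, `plan_shots`, `render_shots`, `sync_dialogue`, `upscale_master`, `run_pipeline`) is hypothetical, and the stage bodies are placeholders rather than real Kling or Veo calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Episode:
    """State handed from stage to stage; all fields are illustrative."""
    script: str
    shots: List[str] = field(default_factory=list)
    dialogue_synced: bool = False
    resolution: str = "source"
    log: List[str] = field(default_factory=list)


Stage = Callable[[Episode], Episode]


def plan_shots(ep: Episode) -> Episode:
    # Placeholder: treat each non-empty script line as one shot.
    ep.shots = [line.strip() for line in ep.script.splitlines() if line.strip()]
    ep.log.append("plan_shots")
    return ep


def render_shots(ep: Episode) -> Episode:
    # Placeholder for the call into the video model (Kling in the text).
    ep.log.append(f"render_shots x{len(ep.shots)}")
    return ep


def sync_dialogue(ep: Episode) -> Episode:
    # Placeholder for the dialogue-sync step (Veo in the text).
    ep.dialogue_synced = True
    ep.log.append("sync_dialogue")
    return ep


def upscale_master(ep: Episode) -> Episode:
    # Placeholder for the final 4K upscale.
    ep.resolution = "4K"
    ep.log.append("upscale_master")
    return ep


PIPELINE: List[Stage] = [plan_shots, render_shots, sync_dialogue, upscale_master]


def run_pipeline(script: str, stages: List[Stage] = PIPELINE) -> Episode:
    """Run every stage in order, with no manual step in between."""
    episode = Episode(script=script)
    for stage in stages:
        episode = stage(episode)
    return episode


result = run_pipeline(
    "Wide shot of the fort at dawn.\nClose-up: the heroine draws her sword."
)
```

Keeping the stages as an ordered list means a step can be swapped or retried without touching the others, which is where most of the orchestration work tends to land.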

The Studio of Tomorrow isn't a building; it's a script.

The Anime Alchemist is your guide to the future of animation.